An arithmetic unit (AU), or its more capable relative the arithmetic logic unit (ALU), enables computers to perform mathematical operations on binary numbers. These units can be found at the heart of every digital computer and are one of the most important parts of a CPU (Central Processing Unit). This note explores their basic function, anatomy and history.

Understanding the machine

If you could take a computer and rip out its heart - what would it look like? This might sound like a strange notion, but is it something we could actually do? Or does the question even make sense?

It's hard to even conceptualise what a computer is these days. Most of us have one form or another sitting in our pockets, strapped to our wrists, or sitting on our desk. They all look completely different and are used for various purposes - do they even work in the same way?

Well, it may surprise you that these devices all use the same fundamental mechanisms to operate. They all stem from the same primordial digital DNA and they all share the same perpetual heartbeat - even if some beat faster than others.

It may also shock some to learn that computers are just dumb machines controlled through a stream of binary instructions being repetitively manipulated by soulless mechanisms. There really is nothing magic or smart about them - regardless of what Siri might tell you.

By definition, a computer, or ‘computational machine’, is a piece of hardware that performs general purpose calculations based on a set of stored instructions. In slightly simpler terms, a computer is a binary calculator on steroids - and one which operates through a repetitive process called the 'fetch-decode-execute' cycle.

Perpetual mechanisms

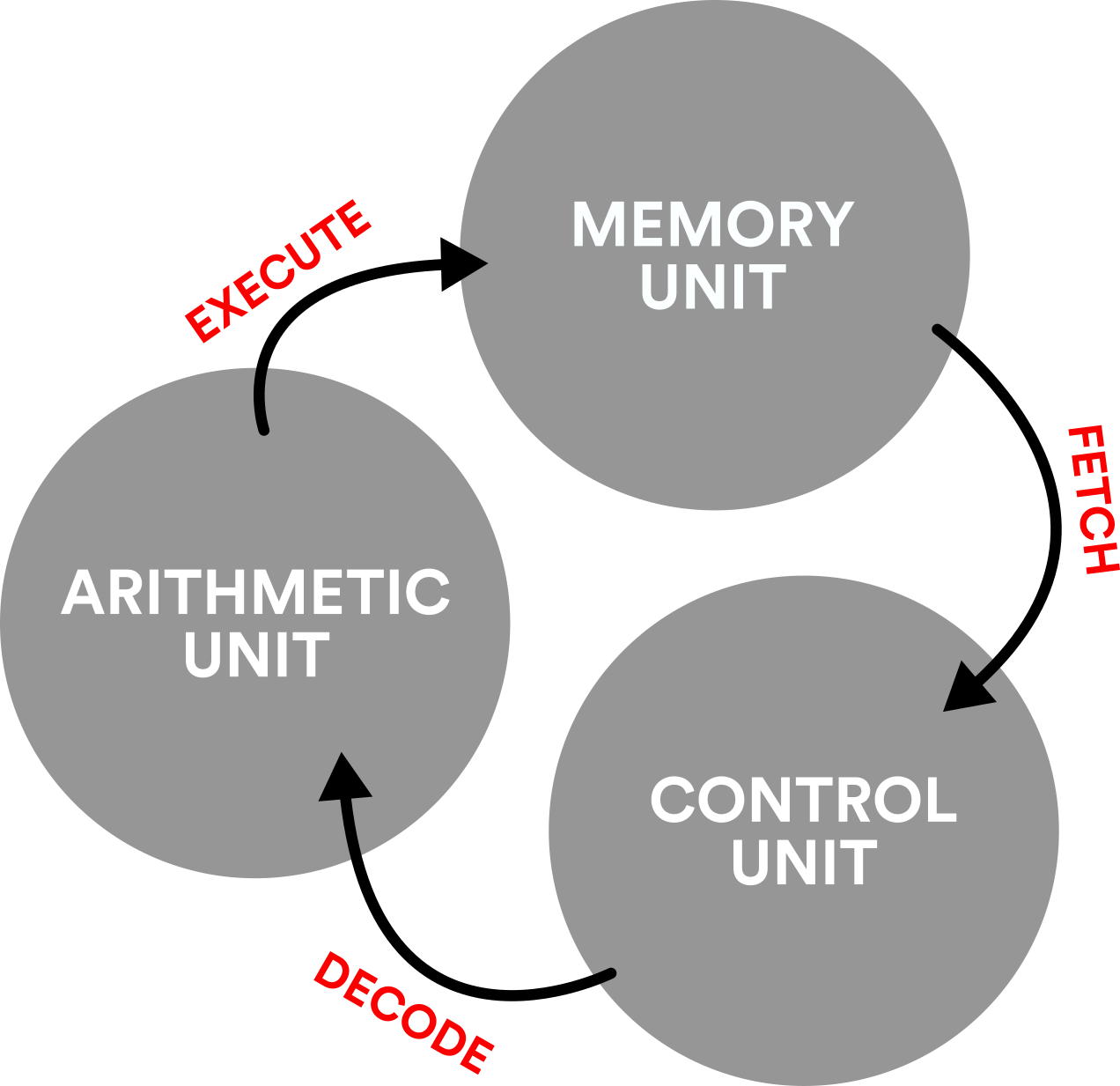

Fetch-decode-execute refers to a computational process that continually fetches instructions from a memory store, decodes them into operations and executes them to perform a calculation. And it is these simple steps which give rise to the complex (and seemingly magical) behaviours we expect from modern computing machines!

The fetch-decode-execute process can be further explained by linking each step of the cycle (FETCH / DECODE / EXECUTE) with one of three hardware subsystems: a memory unit, a control unit, and an arithmetic unit.

FETCH (performed by a memory unit)

A memory unit is the part of a computational machine that contains the machine instructions or data for performing general purpose calculations. This subsystem allows stored instructions or data to be accessed or fetched during a program’s execution.

DECODE (performed by a control unit)

The control unit is responsible for automating and sequencing the fetch-decode-execute cycle – you can think of it as a system ‘conductor’. It also decodes each instruction and makes sure the corresponding system operations are then performed.

EXECUTE (performed by an arithmetic unit)

An arithmetic unit (AU) is a hardware subsystem that performs arithmetic operations on binary inputs. The simplest arithmetic units execute binary addition and subtraction. More complex AUs can perform multiplication, division and logical bitwise operations. These more complex units are usually referred to as ALUs ('Arithmetic Logic Units').
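
To make the three steps concrete, here is a minimal sketch of the cycle as a toy machine. The instruction names (LOAD, ADD, SUB, HALT), the single accumulator and the 8-bit word size are all assumptions invented for illustration; they do not describe any real instruction set.

```python
# Hypothetical toy machine: opcodes and instruction format are invented
# for illustration and do not correspond to any real instruction set.
LOAD, ADD, SUB, HALT = "LOAD", "ADD", "SUB", "HALT"


def arithmetic_unit(op, a, b):
    """EXECUTE: add or subtract two values, wrapping to 8 bits."""
    if op == ADD:
        return (a + b) & 0xFF
    if op == SUB:
        return (a - b) & 0xFF
    raise ValueError(f"unsupported operation: {op}")


def run(memory):
    """Run the program held in `memory` and return the final accumulator."""
    accumulator = 0
    program_counter = 0
    while True:
        instruction = memory[program_counter]  # FETCH (memory unit)
        program_counter += 1
        opcode, operand = instruction  # DECODE (control unit)
        if opcode == HALT:
            return accumulator
        if opcode == LOAD:
            accumulator = operand & 0xFF
        else:
            # EXECUTE (arithmetic unit)
            accumulator = arithmetic_unit(opcode, accumulator, operand)


program = [(LOAD, 5), (ADD, 10), (SUB, 3), (HALT, 0)]
result = run(program)
print(result)  # 12
```

The loop never 'understands' the program; it simply repeats the same three steps until it meets a HALT instruction.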

Anatomy of an Arithmetic Unit & ALU

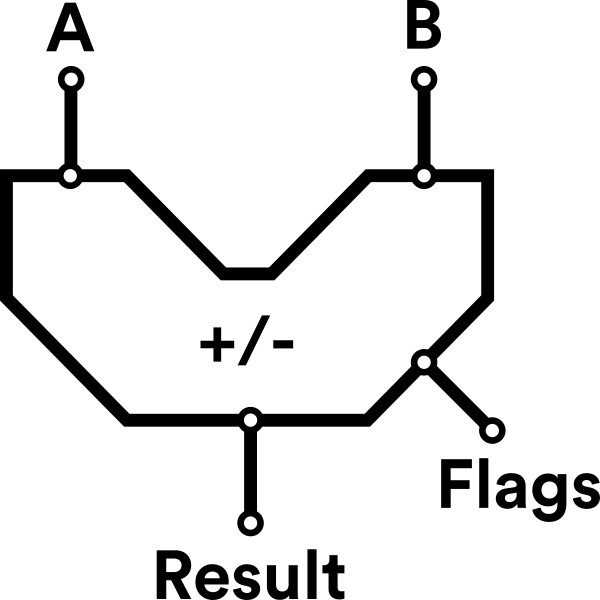

In its simplest form, an arithmetic unit can be thought of as a simple binary calculator - performing binary addition or subtraction on two inputs (A & B) to output a result (to explore how this works, check out our note: Binary Addition with Full Adders).
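
As a rough sketch of that binary calculator, the code below chains single-bit full adders into a 4-bit ripple-carry adder, and reuses it for subtraction via two's complement. The function names and the default 4-bit width are assumptions chosen for illustration, not a description of any particular hardware design.

```python
def full_adder(a, b, carry_in):
    """Add three single bits and return (sum_bit, carry_out)."""
    sum_bit = a ^ b ^ carry_in
    carry_out = (a & b) | (carry_in & (a ^ b))
    return sum_bit, carry_out


def ripple_add(a, b, width=4, carry_in=0):
    """Add two unsigned `width`-bit numbers by chaining full adders.

    Returns (result, carry_out), where result is truncated to `width` bits.
    """
    result = 0
    carry = carry_in
    for i in range(width):
        bit_a = (a >> i) & 1
        bit_b = (b >> i) & 1
        sum_bit, carry = full_adder(bit_a, bit_b, carry)
        result |= sum_bit << i
    return result, carry


def ripple_sub(a, b, width=4):
    """Compute a - b as a + NOT(b) + 1 (two's complement subtraction)."""
    mask = (1 << width) - 1
    return ripple_add(a, ~b & mask, width, carry_in=1)


print(ripple_add(5, 6))  # (11, 0)
print(ripple_add(9, 8))  # (1, 1): 17 does not fit in 4 bits
print(ripple_sub(7, 3))  # (4, 1)
```

Note that subtraction needs no extra adder hardware in this sketch: inverting input B and setting the first carry-in to 1 is enough.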

As well as performing basic mathematical operations, the arithmetic unit may also output a series of 'flags' which provide further information about the status of a result: if it is zero, if there is a carry out, or if an overflow has occurred. This is important as it enables a computational machine to perform more complex behaviours like conditional branching.
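
The sketch below shows one common way these three flags can be derived for a 4-bit addition. The function name, the dictionary of flags and the 'branch if zero' decision at the end are assumptions made for illustration; real machines differ in which flags they provide and how they name them.

```python
def add_with_flags(a, b, width=4):
    """Add two `width`-bit numbers and return (result, flags)."""
    mask = (1 << width) - 1
    sign = 1 << (width - 1)  # position of the sign bit
    a &= mask
    b &= mask
    total = a + b
    result = total & mask
    flags = {
        # The truncated result is exactly zero.
        "zero": result == 0,
        # The unsigned sum did not fit in `width` bits.
        "carry": total > mask,
        # Signed overflow: both inputs share a sign bit and the
        # result's sign bit differs from it.
        "overflow": bool(~(a ^ b) & (a ^ result) & sign),
    }
    return result, flags


# 7 + 1 = 8 fits as an unsigned number, but as a signed 4-bit value
# it wraps round to -8, so only the overflow flag is set.
result, flags = add_with_flags(7, 1)
print(result, flags["carry"], flags["overflow"])  # 8 False True

# A hypothetical 'branch if zero': 15 + 1 wraps to 0 in 4 bits.
result, flags = add_with_flags(15, 1)
next_step = "branch taken" if flags["zero"] else "fall through"
print(next_step)  # branch taken
```

A control unit can test a flag like this after each operation and choose which instruction to fetch next, which is all a conditional branch really is.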

Modern computational machines, however, contain 'arithmetic units' which are far more complex than the one described above. These units may perform additional basic mathematical operations (multiply & divide) and bitwise operations (AND, OR, XOR and so on). As such, a unit of this kind is commonly referred to as an ALU (Arithmetic Logic Unit).
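
One way to picture the step from AU to ALU is as a single unit with an extra 'operation select' input. The sketch below is a simplified model under that assumption; the operation names and the 4-bit default width are hypothetical, and multiply and divide are left out to keep it short.

```python
def alu(op, a, b, width=4):
    """Apply the selected operation to two `width`-bit inputs.

    The result is truncated to `width` bits, as it would be in hardware.
    """
    mask = (1 << width) - 1
    a &= mask
    b &= mask
    operations = {
        "ADD": lambda: a + b,
        "SUB": lambda: a - b,
        "AND": lambda: a & b,
        "OR": lambda: a | b,
        "XOR": lambda: a ^ b,
    }
    if op not in operations:
        raise ValueError(f"unsupported operation: {op}")
    return operations[op]() & mask


print(alu("ADD", 9, 8))            # 1 (17 wraps round in 4 bits)
print(alu("AND", 0b1100, 0b1010))  # 8
print(alu("XOR", 0b1100, 0b1010))  # 6
```

The same two inputs go in every time; only the select input decides which result comes out.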

Because an ALU provides these operations directly in hardware, it can significantly reduce the number of steps required to perform a particular calculation.
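
To get a feel for the saving, the sketch below counts the steps needed to multiply two numbers in two different ways: with repeated addition alone, and with the shift-and-add method that a unit able to shift bits can use. Both functions and their step counts are illustrative assumptions, not measurements of any real processor.

```python
def multiply_by_repeated_addition(a, b):
    """Multiply using only addition; return (product, steps)."""
    product = 0
    steps = 0
    for _ in range(b):
        product += a
        steps += 1
    return product, steps


def multiply_by_shift_and_add(a, b):
    """Multiply by examining one bit of b per step; return (product, steps)."""
    product = 0
    steps = 0
    while b:
        if b & 1:
            product += a  # this bit of b contributes a shifted copy of a
        a <<= 1
        b >>= 1
        steps += 1
    return product, steps


print(multiply_by_repeated_addition(13, 11))  # (143, 11)
print(multiply_by_shift_and_add(13, 11))      # (143, 4)
```

The gap widens quickly: repeated addition takes as many steps as the value of the second number, while shift-and-add takes only as many steps as that number has bits.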

Today, most CPUs contain ALUs which can perform operations on 32-bit or 64-bit binary numbers. However, AUs & ALUs which process much smaller numbers also have their place in the history of computing.

A short history of Arithmetic Logic Units

The idea of computation being made up from discrete subsystems working together to create complex behaviours isn't a 20th century idea. In fact, Charles Babbage was conceptualising programmable machines roughly 100 years before Alan Turing's famous formalisation of a 'Universal Turing Machine' in the 1930s.

A little-known book, 'Faster than Thought' (1953), edited by B. V. Bowden, beautifully describes Babbage's conceptualisation of computation, which includes the notion of a control unit, a memory unit and an arithmetic unit! In a nice nod to the mechanical context of an arithmetic unit at the time, Babbage referred to this subsystem as 'The Mill'.

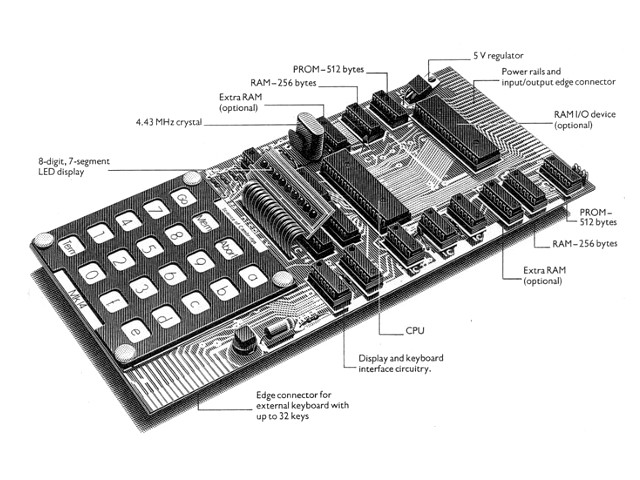

The theoretical foundations of computation saw the light of day through the construction of early digital computers. The MOSAIC computer, for example, ran its first program around 1953, comprised over 6,480 electronic valves and occupied the space of four rooms! The image below shows its 'Arithmetic Rack', which was one of the earliest arithmetic units. It operated at the core of the computer until the machine was decommissioned in the early 1960s. (Note the control rack here too. The memory 'store' was housed in a separate room.)

In the exploration of early digital computers it’s also worth mentioning EDSAC 2 (operational 1958), which was the first computer to have a microprogrammed control unit. For seasoned ALU spotters it is worth visiting the 'Centre for Computing History' in Cambridge which houses a part of the Arithmetic Logic Unit from this machine:

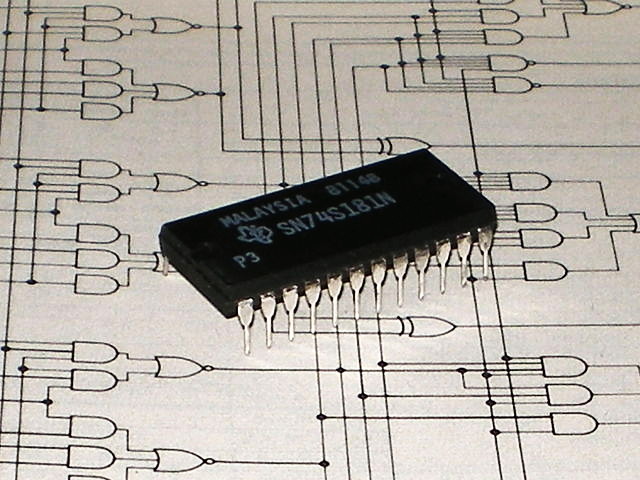

From the 1960s, computers shrank considerably in size thanks to the invention of integrated circuits, which replaced the vacuum tube technology used in early computers. In 1970 Texas Instruments introduced the seminal 74181 TTL IC - a 4-bit ALU - which simplified the design of minicomputers. The chip performed arithmetic operations (addition and subtraction) and logical operations (AND, OR, XOR). It was to become pivotal in the history of ALU design and computing technology, being used in famous computers such as the PDP-11.

Many regard the 74181 TTL IC as a classic chip - even if it is no longer manufactured. Its demise, however, signalled the rise of the microprocessor, where the subsystems of a computer are miniaturised and subsumed in the silicon slices of a single chip.

Learn more:

Today you can no longer actually see a modern ALU or hold one in your hand. And the simple mechanisms which drive everyday computation are now being lost and forgotten in the march of miniaturisation!

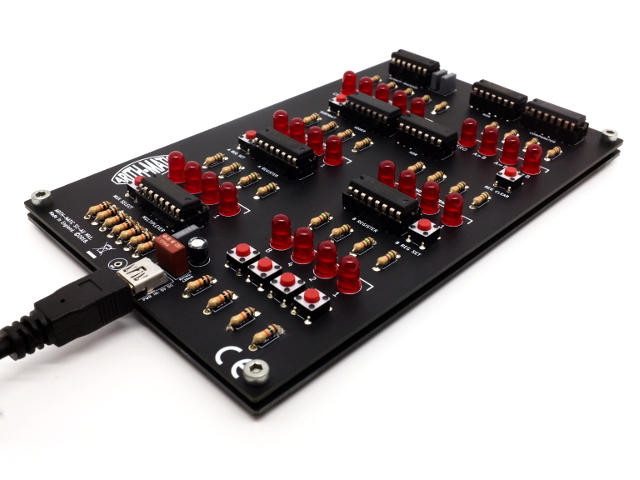

This is why, through our DIY 4-bit Arithmetic Unit, ARITH-MATIC aims to revive the physical and visible connections we once had with the long-lost predecessors of modern digital computing.

To keep up with the latest ARITH-MATIC news, kit releases and blog posts, follow us on Twitter and Facebook.